Robots

SpoK

We integrated Boston Dynamics' Spot with a lightweight 7-DoF Kinova robot arm and a Robotiq 2F-85 gripper to form a legged manipulator. We named the combined platform SpoK.

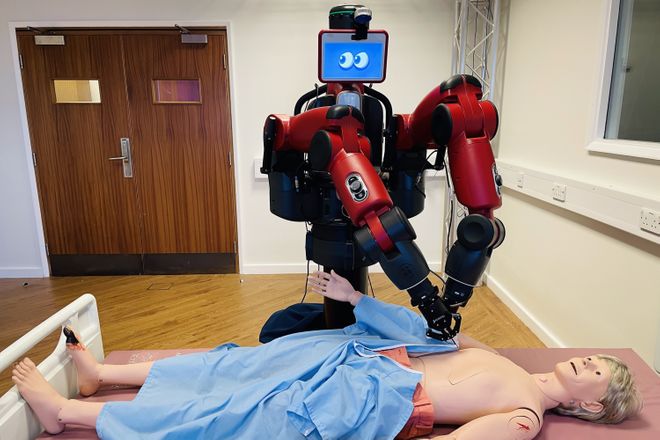

Baxter

Baxter has two 7-degree-of-freedom arms. It is by far the biggest member of our lab and is equipped with a Clearpath Ridgeback mobile base and two Robotiq 2F-85 grippers.

YuMi

YuMi was a game-changer and heralded a new era in which people and robots work side by side safely and productively, without barriers. Collaborative robots add flexibility to assembly processes that must produce small lots of highly individualized products in short cycles. By combining people's unique ability to adapt to change with robots' tireless endurance for precise, repetitive tasks, it is possible to automate the assembly of many types of products on the same line.

Blueberry

Blueberry is our dual-arm assistive wheelchair.

AMIGA

AMIGA (Assistive Mobile Interactive Grasping Agent) is our mobile manipulator, combining a UR10e arm, a Robotiq 3-Finger gripper, and a mobile base. We use it to provide various types of assistance, from collaborative cooking to moving heavy furniture.

AMIGO

AMIGO is AMIGA's counterpart: an identical platform, but equipped with a dexterous Allegro hand.

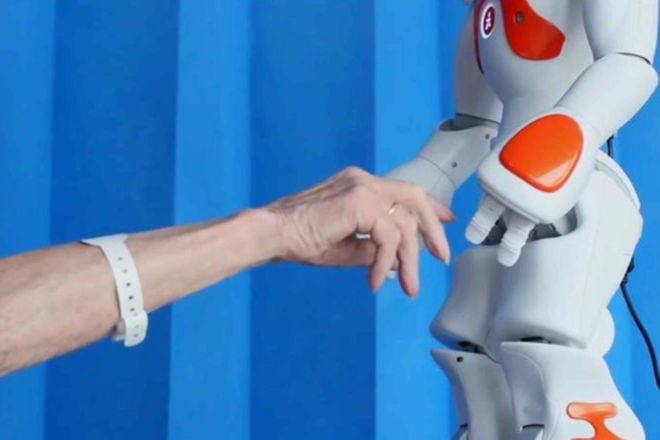

NAO

This small 25-degree-of-freedom humanoid is being used in a hospital to teach children with diabetes how to lead a healthy lifestyle (ALIZ-e and PAL projects). NAO is unique among our robots in that it is the only one that can actually walk. The lab owns two NAOs: zelos and gigio.

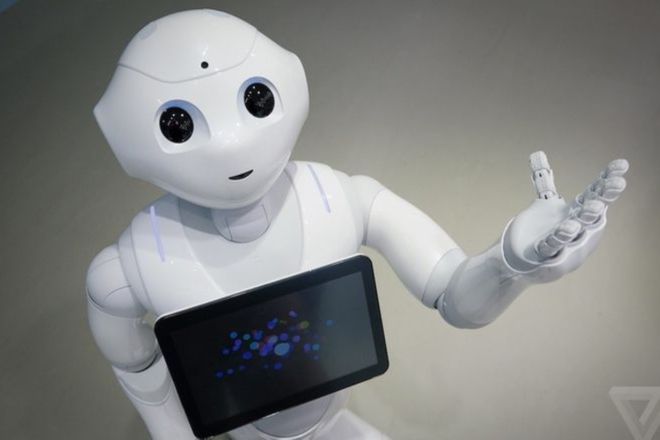

Pepper

Pepper is the world's first social humanoid robot able to recognise faces and basic human emotions.

ARTA

The Assistive Robotic Transport for Adults (ARTA) is our original smart wheelchair. It was used by Carlson et al. to develop shared control mechanisms for users with disabilities. More recently, Sarabia et al. have used it to investigate how to develop a wheelchair driving tutor.

P3-AT, PeopleBot & Double Robot

The P3-ATs and PeopleBots are the oldest members of our lab. Both are differential-drive mobile platforms. Past research with them includes activity recognition, motor babbling and multi-robot learning by demonstration. The lab owns 8 P3-ATs and 2 PeopleBots. Currently our Human-Centred Robotics students use them to learn about robotics and to propose novel Human-Robot Interaction experiments. Double Robot is a remote telepresence robot used in our research into companion robots for people with dementia.

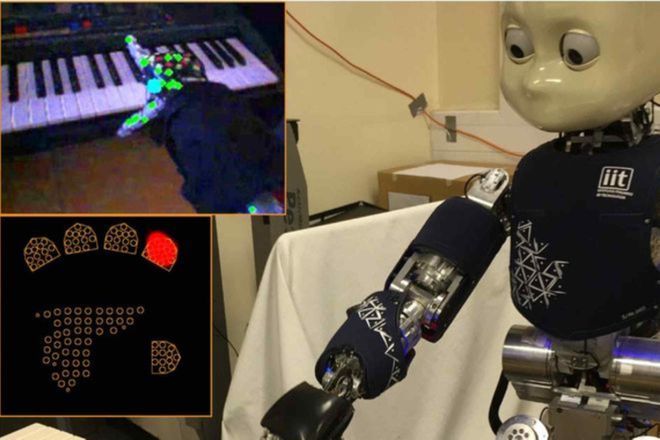

iCub

iCub is a one-metre-tall humanoid with 53 degrees of freedom. It has stereo camera vision and tendon-driven actuators, as well as touch sensors in its hands and arms. Our iCub sits on top of an omnidirectional mobile base, iKart, which allows it to explore its surroundings. Research with the iCub in our group focuses on the human-interaction possibilities of iCub and the Reactable musical table.

HoloLens, Vive, Oculus, Pupil Labs...

Head-mounted augmented reality and virtual reality devices for explainable human-robot interaction.

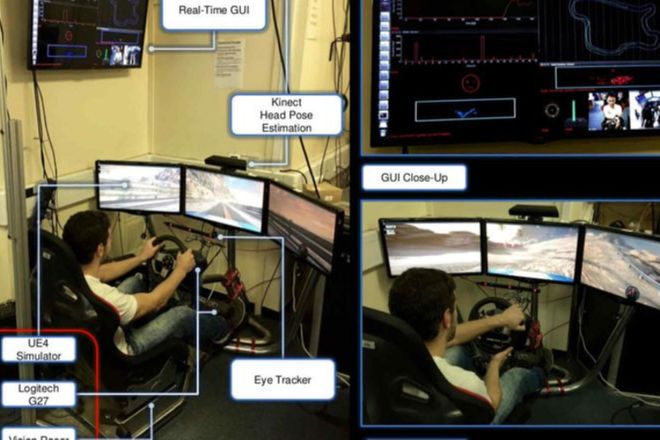

Vision Racer

Vision Racer is used to simulate a safe car-racing environment. It is equipped with three monitors to immerse the driver in the whole experience, and a number of sensors capture the user's reactions to the virtual environment. Ongoing research in our group concentrates on implementing a personal user model for the driver that will be used to train the driver's skills and to help them in predicted dangerous situations.