Do bots help to spread fake news?

No, but they could be part of the answer

“A lie can travel halfway around the world while the truth is still putting on its shoes,” wrote Mark Twain – and never has a maxim seemed truer.

Indeed, last year, an MIT study examining the spread of true and false news online suggested that falsehood spreads further and faster than truth across every topic, and that the effect was magnified when it came to political stories.

Against prevailing wisdom, it also found that this was mostly down to humans – not the automated “bots” that many believed were largely responsible for disseminating the material.

And this makes sense: take that quote from Mark Twain. It seems plausible. It has been quoted in countless news stories on the subject of fake news. But there is almost no evidence that Twain actually said it.

Clearly, combatting fake news is a huge task. And if bots aren’t responsible for spreading fake news, could automated systems actually form part of the solution, helping to identify false stories and check their spread?

It’s a question being investigated by several groups at Imperial, straddling a range of different disciplines.

The first task is to come up with a working definition of fake news, which means making an important distinction between misinformation – a neutral term for material that is untrue – and disinformation, a subset that is deliberately intended to mislead people.

It’s recognised that people may create and spread disinformation for a wide variety of reasons.

“In some cases, it’s just to sell more advertising,” says Dr Julio Amador Diaz Lopez, a Research Associate at Imperial Business Analytics – a centre that connects the Data Science Institute and the Business School – with an interest in misinformation.

“There was a news story about some people in Macedonia who were spreading sensational stories to get clicks through their Facebook stories and earn money.

“They didn’t have an interest in modifying public opinion. But, on the other hand, there are state actors who are trying to generate influence and swing elections.”

For Dr Mark Thomas Kennedy, Associate Professor at Imperial College Business School, getting a handle on the phenomenon means understanding people who are “differently informed” and receptive to dubious information from online sources, favouring it over evidence-driven orthodoxy.

“Sometimes it’s about people trying to deceive us, but other times it is people genuinely believing what they are saying and finding others who believe it with them,” says Kennedy.

“And rather than trying to understand those who, for example, go in for the idea that vaccines are dangerous, there’s a tendency for people like me to say, ‘Well, they’re just ignorant.’

“However, from a scientific perspective it’s more useful to say, ‘They’re differently informed’ than ‘How can we prove to them that they’re wrong?’

“We need to find out about the gaps in their background that lead them to these conclusions. Unless you understand how they consume information and learn, you’re not going to be able to have anything more than a conversation in which you’re shouted down or dismissed.”

This was well illustrated in 2016 when Facebook began to include warning icons next to stories that had been disputed by third-party fact-checking websites.

It stopped doing so a year later, after research revealed that the red flags were causing readers to become further entrenched in their beliefs.

“People just got angry and tried to rationalise why Facebook was lying to them, rather than saying, ‘OK, this is a piece of information that’s not real’,” says Amador.

Kennedy stresses the importance of a cross-disciplinary approach to the problem – bringing in expertise from the spheres of the social sciences and philosophy of science, as is taking place at Imperial.

“Facebook had been approaching this with the idea of ‘Oh well, we’ll just figure out what fake news is and isn’t’,” he says.

“They’re perhaps an example of very smart computer scientists who were themselves differently informed, in that they weren’t so good at realising that these things are not always black and white.”

When done by humans, identifying fake news and working out how it spreads can be hugely labour intensive. Harnessing machine learning and artificial intelligence to help is a holy grail for both academics and the social-media platforms.

In one measure of this, Twitter recently acquired Fabula AI – a startup helmed by Michael Bronstein, Chair in Machine Learning and Pattern Recognition at Imperial, which uses a new class of algorithms to detect misinformation.

Some of the most promising approaches start with a premise that may seem surprising: that AI systems don’t necessarily need to decode or understand a piece of information to work out whether it’s true or false.

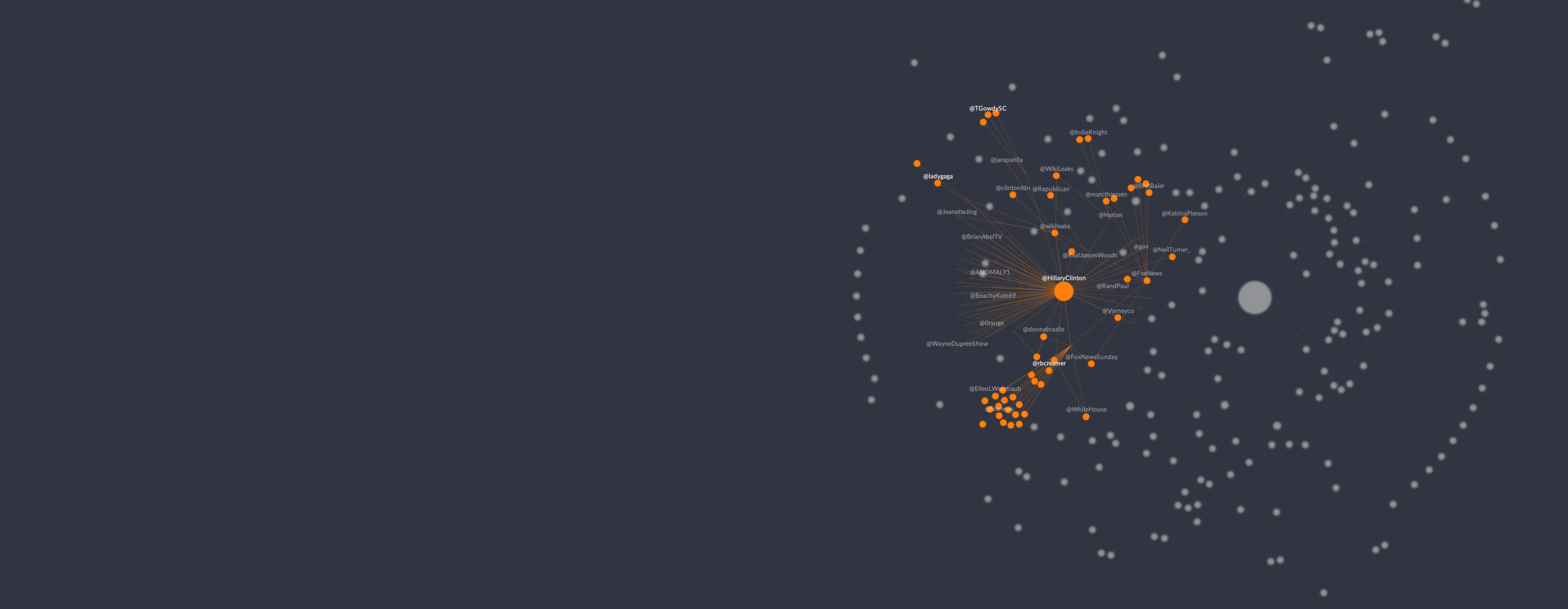

Instead of looking at the “what” – the content of the tweet or post – it may be more profitable to examine the “who” (the person spreading it) and the “how” (the way it propagates).

Miguel Molina-Solana is a Marie Curie Research Fellow at the Data Science Institute, who specialises in data mining and knowledge representation.

He says: “Analysing the content of tweets is very, very difficult. You need to do things such as identifying when someone is just being ironic or is really convinced of the facts – or whether something is simply a typo, or if it’s an intended error to mislead you.

“After some thinking, what we decided was rather than analysing the text, why don’t we analyse features around the tweet?

“How many followers do you have, how many capital letters are you using, and how many emojis are you putting on the tweet?

“All these things have nothing to do with the actual message, but there could be signals there for identifying or at least giving a hint if something is suspicious.”
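
To make the idea concrete, here is a minimal Python sketch of counting such surface features. It is an illustration only, not the researchers' actual method: the `Tweet` record, the `surface_features` function and the emoji code-point ranges are all assumptions introduced for this example, and the ranges are deliberately rough.

```python
from dataclasses import dataclass

# Rough emoji code-point ranges: an assumption for illustration, not a complete list.
EMOJI_RANGES = (
    (0x1F300, 0x1FAFF),  # pictographs, emoticons, transport and related symbols
    (0x2600, 0x27BF),    # miscellaneous symbols and dingbats
)


@dataclass
class Tweet:
    """Hypothetical record; not a type from any real Twitter API."""
    text: str
    follower_count: int


def is_emoji(ch: str) -> bool:
    """Return True if the character falls in one of the rough emoji ranges."""
    code = ord(ch)
    return any(low <= code <= high for low, high in EMOJI_RANGES)


def surface_features(tweet: Tweet) -> dict:
    """Count features 'around' the tweet without interpreting its message."""
    text = tweet.text
    return {
        "followers": tweet.follower_count,
        "capital_letters": sum(1 for ch in text if ch.isupper()),
        "emojis": sum(1 for ch in text if is_emoji(ch)),
    }


features = surface_features(Tweet("SHOCKING news 😱😱", follower_count=120))
print(features)
```

Counts like these would then be passed to a classifier alongside many other signals; on their own they offer, at most, the kind of hint Molina-Solana describes.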

Amador, who has been leading this strand of research, says: “These ‘language leakages’ are able to be detected by a computer but not by a human. And machine learning is also very good at looking at how people are diffusing the information.”

If, as the MIT study suggests, misinformation spreads in a different way from accurate material – rather as cancer cells can be distinguished from healthy ones by the way they divide – then this “signature” could be the key to finding and stopping it.

Machine-learning researchers in Michael Bronstein’s group at Imperial have been looking at a technique called “geometric deep learning”, which is capable of dealing with the messy datasets generated by social media to achieve this.

They showed that fake stories (as determined by professional fact-checkers) could be differentiated with high accuracy from true ones after just a few hours of diffusion on Twitter, by learning their spreading patterns.

An advantage of this approach is that it is language independent: it’s possible to apply the same techniques to stories in English, Russian or Chinese.

It can even offer judgments on the provenance of a piece of information where its content is not available, as in the case of social networks that offer end-to-end encryption.
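
As a rough illustration of what a “spreading pattern” can look like as data, the Python sketch below computes three simple descriptors of a sharing cascade: its size, its depth and its maximum breadth. This is not geometric deep learning and not the group's method; the `cascade_stats` function and the toy edge list are hypothetical, and the sketch assumes the cascade is a tree in which each share has a single source.

```python
from collections import defaultdict, deque


def cascade_stats(edges, root):
    """Summarise a share cascade.

    edges: (parent, child) pairs, meaning `child` reshared from `parent`.
    Assumes a tree rooted at `root` (no cycles, one parent per share).
    """
    children = defaultdict(list)
    for parent, child in edges:
        children[parent].append(child)

    # Breadth-first walk, counting how many accounts sit at each depth.
    depth_counts = defaultdict(int)
    queue = deque([(root, 0)])
    while queue:
        node, depth = queue.popleft()
        depth_counts[depth] += 1
        for child in children[node]:
            queue.append((child, depth + 1))

    return {
        "size": sum(depth_counts.values()),        # accounts reached, including the root
        "depth": max(depth_counts),                # longest chain of reshares
        "max_breadth": max(depth_counts.values()), # widest single level
    }


# Toy cascade, invented for this example.
edges = [("a", "b"), ("a", "c"), ("b", "d"), ("b", "e"), ("b", "f"), ("d", "g")]
stats = cascade_stats(edges, "a")
print(stats)
```

Because descriptors of this kind rely only on who shared from whom, they involve no reading of the text at all, which is what makes a propagation-based approach language independent.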

However, almost all researchers agree that removing humans entirely from the detection process is neither practicable nor desirable.

“Machine learning as we use it is very dependent on human decisions,” says Amador, “and fake news is a human activity, so humans should be involved.”

And beyond this, dealing with the what, who and how of online misinformation still leaves the biggest question – why? For the foreseeable future, it’s not one that machines will be in a position to answer.

Kennedy says: “Fake news is an applied problem for information theorists, computer scientists and intelligence researchers, but it’s also a basic-level social science question.

“Understanding what makes a social group, and what makes a set of people coherent as a culture, is incredibly relevant to understanding why some people want to believe the world is flat.

“We have to look at how our interactions on these new platforms are revealing new aspects to the way we construct our social world.”

This story was published originally in Imperial 47/Winter 2019-20.