Imperial robots helping humans in the home, clinic and over land, sea and air

Dr Mirko Kovac at Imperial Festival

Imperial has one of the largest robotics research clusters in the UK and a new network will help leverage that technology for the benefit of humanity.

There are few areas of technology that capture the public’s imagination quite like robotics. Science fiction is probably partly responsible, with the promise that one day we might all lead lives of leisure and health with robots dealing with any demanding tasks.

The Robot Zone

The truth is that robots have been playing an ever more important role in industry and labour ever since the mechanisation of the textile loom in the 19th Century. But recent advances – including significant work at Imperial – are ushering robotics out of the shadows of the factory floor into the ‘real’ human world. Some of these developments were showcased at the Imperial Festival last month in the new dedicated Robot Zone in the Sir Alexander Fleming Building – which also served as a launchpad for the new Imperial Robotics Network. We spoke with just a few of the researchers working in the network to find out what’s coming next in the world of robotics.

In the home

Most of the robots in operation today are found in very controlled environments such as on assembly lines in automotive plants, where they work within a strict set of parameters on a range of repetitive tasks. They are not very good at reacting to changing circumstances, for one quite simple reason: they are effectively blind.

Andrew Davison (left)

“The whole question of how robots are to understand enough about the world to act autonomously and intelligently within it, is the area I’m interested in,” says Andrew Davison (Computing), Professor of Robot Vision. “You can call it perception, or more specifically vision, because that is the most interesting and powerful sense.”

So can we just strap a digital camera on to robots’ bodies and let them get on with it? Yes and no, says Andrew.

He and his team make a point of using fairly standard digital imaging technology but the real challenge is in making sense of that input. “Really all a robot sees is a string of 0s and 1s – we have to train it to be able to identify something as a shoe or a wall, for example,” Andrew says.

First, a robot must be able to map its surroundings accurately, then place itself within that map and update as it moves around – something the academic community terms Simultaneous Localisation and Mapping (SLAM). It does this by identifying key features and landmarks in the scene and estimating their position based on probability theory. As the robot gathers more data from different angles, it gradually builds up a more assured map.
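The idea of a map that grows "more assured" with each new view can be illustrated with a deliberately simplified sketch. It is not the method used by Andrew's team: it assumes a single landmark on a one-dimensional line, Gaussian measurement noise and a robot whose own position is already known, whereas real SLAM has to estimate the robot's pose and many landmarks together. The names `LandmarkEstimate`, `fuse` and `fuse_all`, and all the numbers, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LandmarkEstimate:
    mean: float      # best guess of the landmark position (metres)
    variance: float  # uncertainty of that guess (metres squared)


def fuse(estimate: LandmarkEstimate, measurement: float,
         measurement_variance: float) -> LandmarkEstimate:
    """Bayesian update of a Gaussian estimate with one noisy measurement."""
    # The gain says how much to trust the new measurement versus the old guess.
    gain = estimate.variance / (estimate.variance + measurement_variance)
    mean = estimate.mean + gain * (measurement - estimate.mean)
    variance = (1.0 - gain) * estimate.variance
    return LandmarkEstimate(mean, variance)


def fuse_all(prior: LandmarkEstimate, measurements: list[float],
             measurement_variance: float) -> list[LandmarkEstimate]:
    """Apply the update once per measurement and keep every intermediate estimate."""
    history = [prior]
    for z in measurements:
        history.append(fuse(history[-1], z, measurement_variance))
    return history


if __name__ == "__main__":
    # Assumed values: a very vague prior and five noisy sightings near 2 m.
    prior = LandmarkEstimate(mean=0.0, variance=100.0)
    sightings = [2.3, 1.8, 2.1, 1.9, 2.0]
    for step, est in enumerate(fuse_all(prior, sightings, 0.25)):
        print(f"after {step} sightings: {est.mean:.3f} m, variance {est.variance:.4f}")
```

Each sighting shrinks the variance, which is the one-dimensional analogue of the map becoming more certain as the robot views a feature from more angles.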

Andrew has been working for some time now with British firm Dyson with a view to developing domestic robots that might perform a range of household functions such as cleaning, vacuuming and maintenance. Current domestic robots are rather inefficient and dumb – relying on trial and error mostly. In February this year a new collaboration was announced with the £5 million Dyson Robotics Laboratory, with top robotics researchers being recruited from around the world. The aim is to get prototypes out in a few years’ time.

Perhaps then we can finally put our feet up.

In the clinic

Historically, Imperial has pioneered the use of robotics in surgery – St Mary’s was the first hospital in the UK to host the ground-breaking da Vinci surgical robot, and the first in the world to use it for heart bypass surgery in 2001. During such procedures a large robotic station equipped with mechanical manipulators and scalpels is remotely controlled by a surgeon sitting at a separate console.

The Hamlyn Centre for Robotic Surgery at the South Kensington Campus now represents an epicentre for new research in this area – feeding through to Imperial's hospital campuses. As well as hosting a da Vinci surgical robot, the Hamlyn Centre is also developing bespoke robotic solutions for various surgical and clinical procedures – such as unblocking the main artery serving the heart through the insertion of a stent.

At present the surgeon makes a small incision in the groin and feeds a catheter through by hand, largely judging position by touch and feel – with the help of X-ray fluoroscopy imaging.

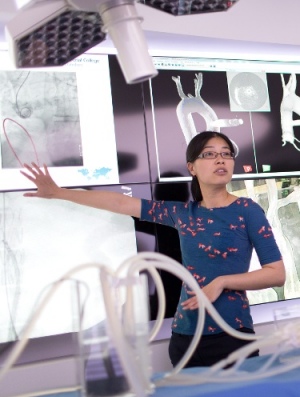

Su-Lin demonstrates robotic vascular surgery using model vessels

“It requires considerable skill, something that really only the senior surgeons can do after performing hundreds of these operations; the learning curve for surgical interns is very steep indeed,” says Dr Su-Lin Lee (Computing).

Using a small robotic wire-feeder, Su-Lin is trying to automate the navigation procedure. She takes data from MRI scans of patients’ main blood vessels then programmes the robot’s ‘brain’ so it knows the path the catheter must take through the body – even accounting for deformation of vessels due to breathing.

“The robotic navigation techniques we’re developing should allow for a more consistent procedure with greater stability and easier manipulation,” says Su-Lin, who has been testing her system with model vessels and hopes to move to patient trials soon.

While robotic surgery research continues apace, robotic devices are also starting to find their way into other diverse areas of healthcare – including for rehabilitation, neuro-prosthetics and assistive technology.

Etienne Burdet (Bioengineering) is Professor of Human Robotics and one of his many research interests involves helping stroke patients recover some of their physical mobility. Often this is done with quite simple robotic levers that patients move to interact with a game on a computer screen. The games are incentive-based and the robotic devices can be adjusted to deliver varying degrees of mechanical assistance, depending on the patient’s impairment and level of recovery. It’s affordable technology that is currently being developed with Imperial Innovations for use in various care settings and even in patients’ homes.

“A patient might be asked to simulate an everyday task using our tools, such as opening a lid. We can then examine their performance and encourage them to repeat or change their movements,” Etienne says.

Another robotics solution – on display at the Imperial Festival last month – is designed for people who can’t move their arms or legs at all. Dr Aldo Faisal (Bioengineering/Computing) has developed a device that can be attached to a wheelchair and which, alongside a laptop, allows people to drive around using just their eyes. Although similar systems allowing people to steer with their eyes exist, the new set-up is unique in that it can discern whether the user is looking in a direction because they actually want to move there – or simply looking around to assess their surroundings.

Initial tests carried out by researchers at the Imperial Festival suggest it is intuitive and responsive. “All the smartness is in the software,” says Faisal, adding that the device could cost no more than £50 and be on the shelves in 3 years’ time.

Over land, sea and air

Some would argue that we’re already surrounded by the ultimate robots – perfectly adapted to their environments; their design honed over millions of years.

Dr Mirko Kovac (Aeronautics), Director of the Aerial Robotics Lab, looks to nature when designing his small, autonomous, lightweight robots that could potentially be deployed in swarm formation for exploration, sensing and even construction.

“I’m fascinated by the beauty of natural solutions; it’s something that drove me to go into robotics and work at this interface between biology and engineering,” Mirko says, adding: “at the moment we can’t compete with the level of energy efficiency that biology achieves routinely.”

Mirko and his team have built and demonstrated various bio-inspired robots to perform certain tasks, such as a ‘grasshopper’ robot that can jump 27 times its own height with gears and springs; a gliding robot that steers towards light using special shape-changing alloys; and a butterfly-inspired glider propelled by a tiny three-stage rocket.

“We address different key challenges, demonstrate prototypes, then move to another key challenge; and as we go along we integrate certain features into more complete systems,” Mirko explains.

One such system currently in development is an aerial-aquatic robot inspired by diving birds and flying fish. The team has drawn up an early design with a mass of around 2.6 g that would propel itself 4.8 m out of the water using a water jet propulsion mechanism. A fleet of these would work together to survey waters polluted by an oil spill, for example.
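To give a feel for what those design figures imply, here is a rough back-of-envelope sketch. It is not the team's own calculation: it assumes the 4.8 m is a vertical height, ignores air and water drag, and treats the robot as a point mass of constant 2.6 g (a real water jet would shed mass as it fires). The function names are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def launch_speed(height_m: float) -> float:
    """Speed needed at the water surface to coast up to height_m, ignoring drag."""
    return math.sqrt(2.0 * G * height_m)


def jump_energy(mass_kg: float, height_m: float) -> float:
    """Minimum energy (joules) to lift mass_kg by height_m: E = m * g * h."""
    return mass_kg * G * height_m


if __name__ == "__main__":
    mass_kg = 2.6e-3   # 2.6 g, from the article
    height_m = 4.8     # assumed to be vertical height
    print(f"launch speed: {launch_speed(height_m):.2f} m/s")
    print(f"energy per jump: {jump_energy(mass_kg, height_m):.3f} J")
```

Under these assumptions the robot would need to leave the water at just under 10 m/s, using a little over a tenth of a joule per jump.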

At the Imperial Festival Mirko and team also showcased a new prototype aerial robot using a quad-copter capable of 3D printing a foam ‘nest’ for itself to land on and perform tasks. The concept could find a use in maintenance and building in remote or hostile locations such as offshore wind farms and decommissioned nuclear plants.

Who knows, perhaps someday we might see our city skylines dotted with airborne insectoid builders, busy day and night, assembling the skyscrapers of the future.

Article text (excluding photos or graphics) © Imperial College London.

Photos and graphics subject to third party copyright used with permission or © Imperial College London.

Reporter

Andrew Czyzewski

Communications Division