Robotics revolution

For the past century, we’ve been bombarded with messages about a future in which robots take over the world, and ultimately destroy us. But behind the scenes, they're playing an increasingly important role in our lives.

Robots are already being used to pick and pack our online deliveries, vacuum our homes, and enable surgeons to carry out life-saving operations. In the coming decades, robots have the potential to further enhance human capabilities, providing better, more individualised care; cheaper, cleaner, and more efficient transport; and tackling mundane, repetitive and unpleasant tasks in unstructured environments like our cities, homes and hospitals.

Realising the full potential of robotics is a major challenge, since it requires designing robots with the ability to adapt to humans and to unpredictable or rapidly changing situations with great physical robustness and dexterity. It will require collaboration between different scientific disciplines and industry, and public debate about the roles robots should play in our lives.

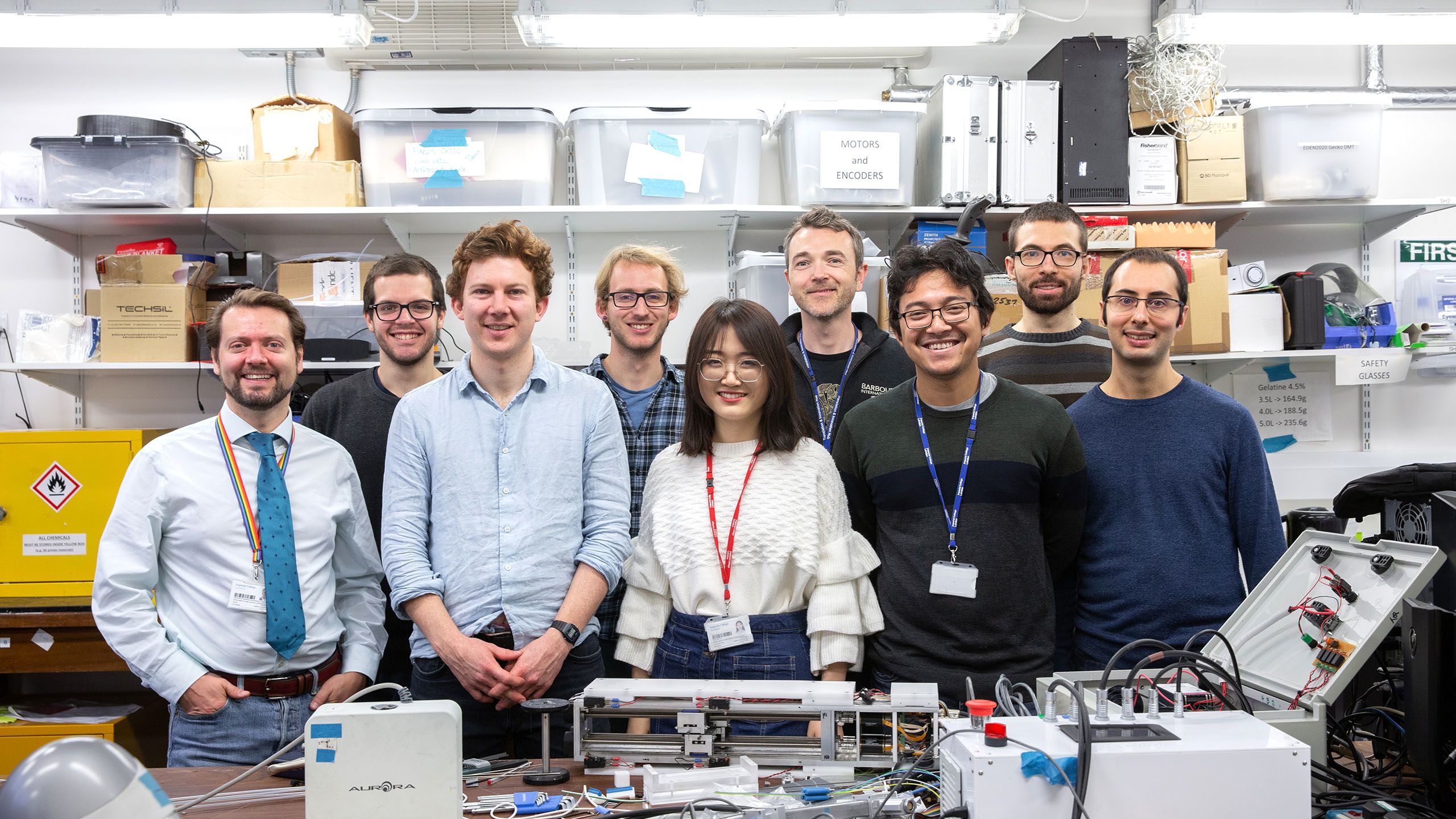

Professor Ferdinando Rodriguez y Baena (left) with team members - just a few of Imperial's 350 robotics researchers.


Robot-assisted surgery

Healthcare is an area in which the benefits of robotics are already being felt, and Imperial pioneered some of the very first robots for surgery in the 1990s. Professor Brian Davies, in the Department of Mechanical Engineering, developed the world’s first special purpose medical robot, called Probot, which was used for prostate surgery in 1991. In the same year, Professor Davies began collaborating with Professor Justin Cobb of Charing Cross Hospital on research into orthopaedic robotic surgery, resulting in a company, ACROBOT, that was active for the next 12 years.

Professor Brian Davies. Photo: Ian Smithers


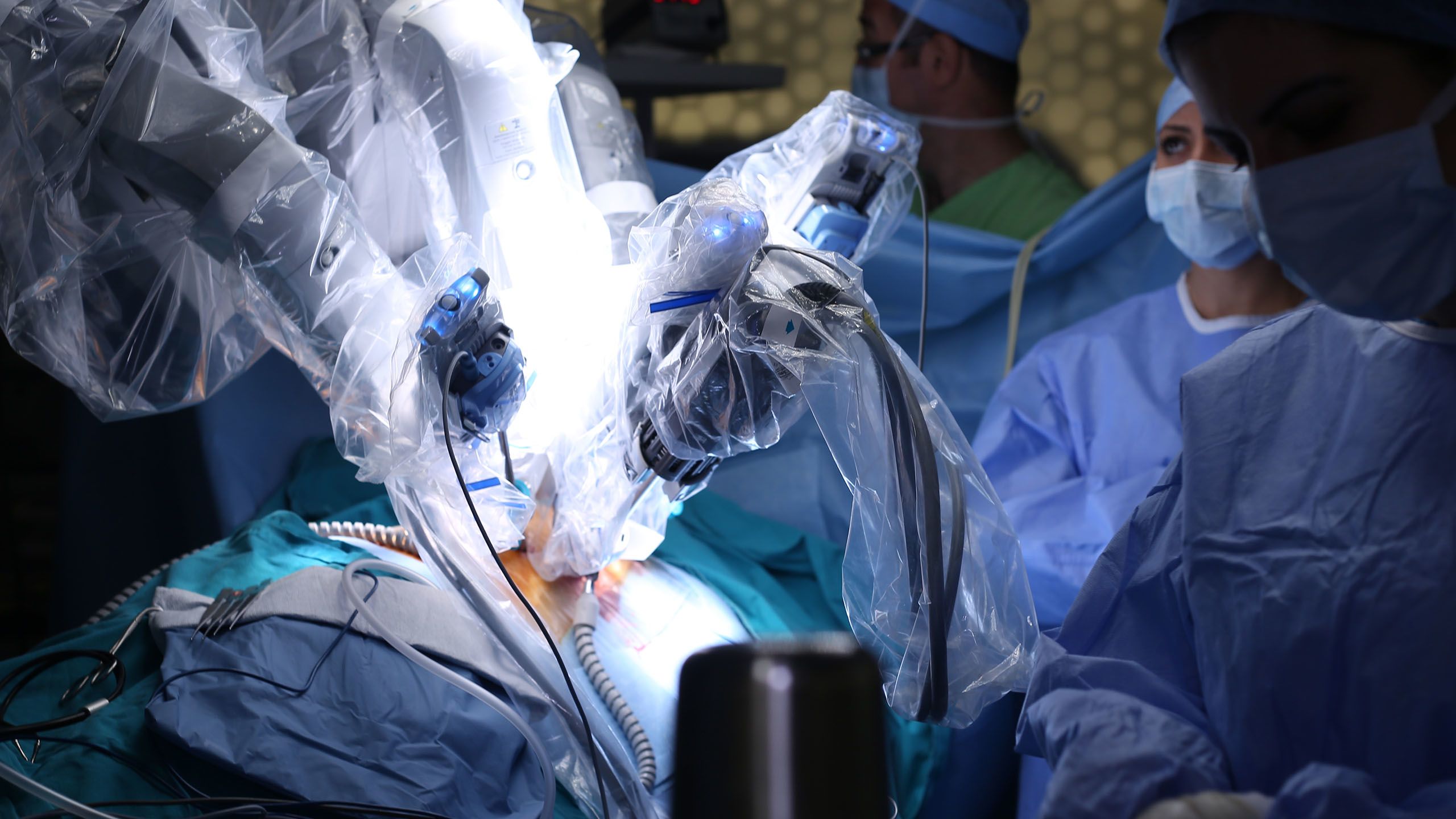

In 2001, another early surgical robot – the Da Vinci surgical system – was installed at St Mary’s Hospital, part of the Imperial College Healthcare NHS Trust. It has since spread to more than 70 NHS hospitals across the UK. The robot acts as an extension of the surgeon's hands, miniaturising their movements and reducing any shaking. It is primarily used to carry out procedures such as removal of the prostate gland and associated tissue (prostatectomy), heart valve repair and gynaecologic surgery through tiny incisions. “There’s now substantive evidence that using a robot for prostatectomy will leave you with better margins – meaning you take most of the bad stuff out while leaving all the good stuff in – and with a substantially reduced risk of impotence or incontinence,” says Professor Rodriguez y Baena. However, these robots were only the beginning.
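The idea of miniaturising a surgeon's movements while reducing shaking can be illustrated with a toy sketch. It is not how the Da Vinci system works internally; the function name, the scale factor and the smoothing weight are all assumptions chosen for illustration, and the smoothing used here is a plain exponential moving average.

```python
def scale_and_smooth(hand_positions_mm, scale=0.2, alpha=0.1):
    """Map hand positions (mm) to instrument positions (mm).

    scale < 1 shrinks the motion (hypothetical value).
    alpha in (0, 1] is the weight given to the newest sample in an
    exponential moving average; small values damp rapid shaking.
    """
    if not hand_positions_mm:
        return []
    smoothed = hand_positions_mm[0]  # start the filter at the first sample
    tool_positions = []
    for position in hand_positions_mm:
        # Blend the new sample with the running average to suppress jitter.
        smoothed = alpha * position + (1 - alpha) * smoothed
        # Shrink the smoothed hand motion down to instrument scale.
        tool_positions.append(scale * smoothed)
    return tool_positions


# Usage: a hand held near 10 mm that shakes by +/- 1 mm on every sample.
shaky_hand = [10.0 + (1.0 if i % 2 else -1.0) for i in range(100)]
tool = scale_and_smooth(shaky_hand)
print(max(tool[-20:]) - min(tool[-20:]))  # residual shake at the instrument
```

A smaller `alpha` removes more shaking but makes the instrument lag further behind the hand, which is the basic trade-off in any filter of this kind.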


Imperial researchers are developing the STING probe (standing for Soft Tissue Intervention and Neurosurgical Guide) – a flexible robotic needle that can be steered through the brain to deliver drugs and diagnostic sensors to areas that are currently inaccessible because of the high risk of brain damage, e.g. to treat deep-seated brain tumours. “At the moment, the way we deliver drugs inside the brain is via rigid cannulas, but if there's something between your entry point and the deep-seated target that you don't want to intersect or damage, you cannot operate,” says Professor Rodriguez y Baena, who also heads Imperial’s Mechatronics in Medicine lab, which developed the device. “With a flexible catheter you can steer around it and still get to where you want to get and leave the catheter in place to enable future therapy without another surgery.”

The needle is inspired by a long hollow tube called an ovipositor, which female wood wasps use to lay their eggs. Consisting of a number of interlocking segments, which slide against one another to drill into the bark of trees, it is also flexible enough to be steered around obstacles. Together with collaborators in Italy, Germany and the Netherlands, under the auspices of a European consortium grant codenamed EDEN2020, the Imperial team is currently testing the robotic needle in sheep as part of a broader neurosurgical platform that should allow surgeons to plan a route, navigate and then deliver drugs to deep within the brain.

The lab is also developing systems to prevent human surgeons from accidentally straying into delicate tissue regions by physically nudging them to keep them within a pre-operatively defined area, as well as optical techniques and machine learning algorithms to seamlessly integrate such systems into the operating theatre, avoiding the need for the medical team to spend time setting them up for each patient. “The purpose of all this technology is not to replace surgeons, but to augment their capabilities and free up time, which surgeons can use to plan and carry out more complex operations,” Professor Rodriguez y Baena says.

Imperial’s Robotics Forum aims to facilitate collaboration by bringing together 41 laboratories, 44 principal investigators and more than 300 postdoctoral researchers and students from various fields of robotics.

“The Robotics Forum allows cohesive networking activities between researchers at Imperial, and between Imperial and other universities, industrial partners, government bodies and the wider public,” says Eloise Matheson, the Forum’s manager.

“Robotics is something that you can do alone, but it doesn’t work very well. Really, you need to integrate with people from other fields to get ideas or expertise,” says Professor Etienne Burdet, who co-established the Forum in 2015. Its efforts can be grouped into three broad thematic areas: healthcare, infrastructure, and domestic and assistive robots.

“These are the key applications for which we think we have a competitive advantage and where we can offer something that industry and wider society could benefit from,” says Ferdinando Rodriguez y Baena, Professor of Medical Robotics and the Forum’s spokesperson.

Rehabilitation robots

Useful as surgical robots are, at this stage they are still just an extension of the human surgeon: he or she provides instructions, which the robot follows. However, developing a more interactive relationship between man and machine might enable robots to be used in other areas of medicine, such as rehabilitation after a stroke or spinal cord injury.

Members of Imperial’s Human Robotics Group (HRG) have already developed a ‘virtual physiotherapist’ called gripAble, which helps patients with arm paralysis to improve their range of motion. It is being further developed and marketed by an Imperial startup company with the same name. Consisting of an electronic grip that the patients squeeze, turn or lift, the device translates even tiny movements of patients’ hands or arms into meaningful movements on a tablet screen, which enables them to play computer games whilst also training their arm strength. This kind of repetitive, task-specific exercise is the only intervention which has been shown to improve arm function in stroke patients, but the amount they receive is often limited by the cost and availability of physiotherapists. So, the HRG is developing devices to increase the amount of physical therapy available to them.

Ideally, rehabilitation robots would learn how individuals move, and then use this knowledge to tailor training to them – as well as modifying it as their physical condition changes, just like human physiotherapists do. “The challenge is to shape this two-way interaction – particularly when we don’t understand the motor control of the individual patient,” says Professor Burdet, who leads the HRG.

He and his colleagues have been studying how forces are exchanged between two people when they work together on a physical task. Their research suggests that the brain constructs a model of the partner’s movements that it then uses to improve its performance; for instance, the physiotherapist will build a model of the patient’s motor control. If robotic devices can be designed to mimic this process, they may enable people to make greater progress during rehabilitation.

Professor Burdet and colleagues are now examining how interactions between individuals can affect task outcomes using the branch of applied maths known as game theory. Imagine you are playing a game of chess: your success will depend on both the moves you make, and the moves of your opponent – and the same goes for your opponent. Game theorists model situations to make predictions about the various outcomes, such as the chances you will win this chess game based on your current strategy. Professor Burdet’s team is using game theory to develop algorithms that will allow robots to make predictions about a patient’s goal from their movements and adapt their behaviour to assist them. They are currently collaborating with researchers in Asia and Europe to test these algorithms in physical rehabilitation robots.
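The kind of prediction game theorists make can be shown with a small textbook calculation. The sketch below finds the pure-strategy Nash equilibria of a two-player game: the pairs of choices where neither player can do better by changing their own choice alone. The payoff tables and the "reach left / reach right" scenario are hypothetical, and this is not the algorithm Professor Burdet's team is developing.

```python
def pure_nash_equilibria(payoff_a, payoff_b):
    """Return (row, col) pairs where neither player gains by deviating alone.

    payoff_a[r][c] is the row player's payoff and payoff_b[r][c] the column
    player's payoff when the row player picks r and the column player picks c.
    """
    rows, cols = len(payoff_a), len(payoff_a[0])
    equilibria = []
    for r in range(rows):
        for c in range(cols):
            # Row player cannot improve by switching rows in this column.
            a_content = all(payoff_a[r][c] >= payoff_a[r2][c] for r2 in range(rows))
            # Column player cannot improve by switching columns in this row.
            b_content = all(payoff_b[r][c] >= payoff_b[r][c2] for c2 in range(cols))
            if a_content and b_content:
                equilibria.append((r, c))
    return equilibria


# Hypothetical coordination game: patient (rows) and robot (columns) each
# choose "reach left" (0) or "reach right" (1); both score 1 if they agree.
agree = [[1, 0],
         [0, 1]]
print(pure_nash_equilibria(agree, agree))  # [(0, 0), (1, 1)]
```

The two equilibria are the two ways of agreeing, which is why a robot that can infer which way its partner is heading has an advantage over one that simply guesses.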

Professor Etienne Burdet with members of the Human Robotics Group, who are designing robotic devices to assist with neuro-rehabilitation.


Robot construction teams

The application of game theory to robotics could lead to improvements in other areas as well, such as the use of robots to build and repair infrastructure in cities or environments such as underground, offshore, or in space. Infrastructure robotics describes the development of machines to take over the maintenance, inspection and repair of offshore oil and gas platforms, wind turbines, bridges, dams and buildings, or their deployment in hazardous environments such as nuclear storage facilities. Currently, most of these tasks are done by humans, exposing them to various dangers including height, hazardous chemicals and gases, heat, wind or rough seas. “It also often requires facilities to be shut down for long periods of time while these operations are carried out, which has enormous cost implications,” says Dr Mirko Kovac, director of the Imperial Centre of Excellence for Infrastructure Robotics Ecosystems (CEIRE). “There’s a very strong case for using robots to address some of this.”


Indeed, it is estimated that doing so could reduce maintenance costs for infrastructure by 20–40 per cent, as well as improving worker safety. Robots could also be sent into disaster areas, for example in the wake of an earthquake or nuclear accident, to assess and repair structures or rescue human victims. Remotely-operated drones are already sometimes used in situations like these to carry out visual inspections before sending in human teams. However, in some settings, for example collapsed buildings, it is impossible to maintain a communications link. In cases like these, semi-autonomous teams of robots could be sent into hazardous situations to carry out tasks themselves, perhaps under the supervision of a human commander.

Control engineering, which concerns the use of sensors, actuators and feedback to make a system behave in a desired manner, is at the heart of autonomous robotics. Yet, ensuring reliable autonomous operation of teams of robots is challenging. “In traditional applications, there has been one central computer that controls everything a robot does, but when you have many individual robots with small and not very powerful computers on board and which, in addition, are subject to limited communication, control becomes quite challenging,” says Dr Thulasi Mylvaganam, a lecturer in Imperial’s Department of Aeronautics.
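The sensor, actuator and feedback loop described above can be sketched in a few lines. This is a minimal illustration, assuming a robot that moves along a single axis and a simple proportional controller; the function name, gain and step count are hypothetical and not taken from any Imperial system.

```python
def simulate_feedback(target, start=0.0, gain=0.5, steps=50):
    """Drive a one-dimensional robot towards `target` using feedback.

    On each step the robot measures its error (the sensor), computes a
    command proportional to that error (the controller) and moves by that
    amount (the actuator). Returns the list of positions visited.
    """
    position = start
    history = [position]
    for _ in range(steps):
        error = target - position   # sensor: how far from the goal?
        command = gain * error      # controller: proportional response
        position += command         # actuator: apply the command
        history.append(position)
    return history


# Usage: move from 0 to 10; the remaining error halves on every step.
path = simulate_feedback(target=10.0)
print(path[:4])  # [0.0, 5.0, 7.5, 8.75]
```

With a gain between 0 and 1 the error shrinks on every step; real robots add complications such as sensor noise, delays and physical limits, which is where the engineering difficulty lies.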


Dr Mylvaganam has worked on several topics in nonlinear control and found control methods based on ideas from game theory particularly useful for tackling problems involving the autonomous operation of teams of robots. For instance, she has developed and applied game theory-based strategies for motion planning for multi-agent systems, and with her team used the result to design feedback strategies for autonomous coordination of wheeled robots. The resulting collision- and deadlock-free navigation of teams of robots has been experimentally demonstrated in challenging environments with several obstacles.

She and her team are currently working on developing control methods applicable for situations in which communication between robots is limited. In such settings, the overall behaviour of the team must be shaped by individual actions based only on individual measurements and local interactions. The development of such control methods is crucial for enabling future technologies in which cooperative teams of robots play a more central role. Although the practical applications of this technology are still some way off, enabling robot collaboration would vastly increase their capabilities. “Eventually, we imagine that there will be ecosystems of different robots working together – and the development of multiagent systems will be part of this,” says Dr Kovac.
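How a team's overall behaviour can emerge from local interactions alone can be illustrated with a standard textbook scheme known as consensus averaging. It is a stand-in chosen for simplicity, not Dr Mylvaganam's method; the neighbour layout, the update rate and the starting values are assumptions.

```python
def consensus_step(values, neighbours, rate=0.3):
    """One round of updates in which each robot sees only its neighbours.

    values[i] is robot i's current estimate (for example, a meeting point
    along a corridor). neighbours[i] lists the robots that robot i can hear.
    Each robot nudges its estimate towards those of its neighbours.
    """
    updated = []
    for i, own in enumerate(values):
        # Sum of differences between each neighbour's estimate and our own.
        correction = sum(values[j] - own for j in neighbours[i])
        updated.append(own + rate * correction)
    return updated


# Four robots in a chain: each can only talk to the robots next to it.
chain = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
estimates = [0.0, 4.0, 8.0, 20.0]
for _ in range(200):
    estimates = consensus_step(estimates, chain)
print(estimates)  # every robot ends up close to the team average of 8.0
```

No robot ever sees the whole team, yet all of them settle on the same value. The rate must stay below one divided by the largest number of neighbours any robot has, otherwise the updates overshoot.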

Also necessary is the design of new hardware to facilitate these robot-robot interactions. For instance, former Imperial aeronautics engineer Dr Pooya Sareh, from Dr Kovac’s group, recently demonstrated an origami-inspired cushioning system that would allow drones to dock with autonomous vehicles, for example to deliver medicines to moving ambulances, without damaging themselves or the ambulance. This cushioning would also protect the drones and keep them airborne if they did accidentally crash into something.

Dr Thulasi Mylvaganam (left) with PhD student Benita Nortmann


Spider-drones

Meanwhile, Dr Kovac, who also heads Imperial’s Aerial Robotics lab, has been developing ways of landing robots on vertical structures, such as wind turbines. This is essential if robotic repair teams are to be deployed on offshore sites without humans having to place them there.

To design these unconventional machines, Dr Kovac drew inspiration from nature – in this case spiders. “Spiders can perch on structures like trees using strings, and robots can use similar methods to attach themselves to offshore wind farms,” he says. Working with other academic and industrial collaborators as part of the Offshore Robotics for Certification of Assets (ORCA) hub, Dr Kovac and his team have created an autonomous drone that can attach itself to offshore wind turbines using a magnetic anchor and a spool of thread. Once attached, the robot can then carry out inspections or repairs without a human controller needing to be stationed nearby.

Getting drones out to these sites is another challenge. Current models are often unable to fly in high winds, which are common at sea, and even on relatively calm days there may be local spots of turbulence. The last thing you want is for your expensive repair robot to be floored by a rogue gust of wind. Equipping aerial robots with strategies to deal with unexpected conditions is therefore essential.


Here too, nature can help. Dr Huai-Ti Lin’s lab at Imperial has been studying how dragonflies manage to stay airborne in real-world conditions. “One thing we’ve discovered is that the dragonfly’s highly corrugated wings contain an extensive network of sensors that are intimately integrated into the mechanical structure of the wing,” Dr Lin says. Ridges on the wings seem to channel the air and allow local fluctuations in airflow to be detected by strategically placed sensors. Such construction enables the sensory system to distinguish local circulation from the high-speed airflow created by the insect’s flight. A better understanding of how dragonflies integrate these sensory signals and adapt their flying behaviour may enable the design of intelligent wings and more robust aerial robots.

The beauty of studying insects is their relative simplicity. Their small brains suggest it may not be necessary to deploy vast numbers of expensive sensors and computational equipment to achieve impressive physical feats. “There’s a strong evolutionary selective pressure for doing only the things that are needed, efficiently,” says Dr Lin. Another example of this is dragonfly vision. Dragonflies are successful aerial predators, able to intercept flying prey in a matter of a few hundred milliseconds – roughly how long it takes us to blink. They quickly and effectively negotiate the world without having an explicit understanding of each object they encounter.

Dr Lin’s team has been dissecting the movements of the dragonfly’s eyes, bodies and wings during flight, to understand how such visual guidance is achieved. In the process, Dr Lin’s group develops bio-inspired motion detection and tracking algorithms for machines. These algorithms are currently being tested in micro-racing drones and other terrestrial autonomous robots. “When you see a physical robot starting to behave like the animal, you know you are on to something. Intelligent or not, intelligent behaviours are the basic requirement of a modern robot,” Dr Lin says.

Robot learning

Creating robots that can not only autonomously navigate through a changing and unpredictable world, but also interact with the objects and people in it, may require equipping them with a very human quality: the ability to learn. This is particularly true of robotic assistants that may someday help us with everyday tasks in the home, such as carrying large objects, fetching groceries, or accompanying us to the park for a game of football.

“Humans are capable of doing many different things and we’re also able to learn new skills very quickly, by leveraging our prior knowledge. These things are very difficult even for state-of-the-art algorithms,” says Dr Petar Kormushev, director of Imperial’s Robot Intelligence Lab.

Traditionally, roboticists have had to define every part of a robot’s control manually, specifying the motion of every joint and sensor. But this is extremely time-consuming and creates very rigid and inflexible robots. “No matter how we do it, if we must manually programme each robot to do every task that we might want them to do, it will be impossible,” Dr Kormushev says. “Rather, we are trying to find ways to make algorithms more efficient, so [the robots] can reuse what they already knew from before and then reapply it when they’re facing some new problem.”


They have already taught a robot to flip a pancake, using reinforcement learning algorithms that involve telling the robot the goal of the task – tossing a flat pancake into the air, making it spin 180°, and then catching it again – and then allowing it to figure out how to achieve this goal through multiple trials and errors.
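Learning from a goal plus repeated trials and errors can be sketched with a deliberately simple stand-in: random-search hill climbing, a much cruder relative of the reinforcement learning used in the lab. Everything here is hypothetical, including the toy "simulator" in which the pancake's rotation grows in proportion to a single flick-strength setting.

```python
import random


def pancake_reward(flick_strength):
    """Hypothetical toy simulator: score a flick by how close the pancake
    comes to a 180-degree rotation. The best possible reward is 0."""
    rotation_deg = 60.0 * flick_strength  # assumed, made-up physics
    return -abs(rotation_deg - 180.0)


def learn_by_trial_and_error(trials=200, step=0.5, seed=0):
    """Try random tweaks to the flick and keep only those that score better.

    The learner is told the goal only through the reward; it never sees
    the formula inside pancake_reward.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    best_strength = 1.0
    best_reward = pancake_reward(best_strength)
    for _ in range(trials):
        candidate = best_strength + rng.uniform(-step, step)  # a new attempt
        reward = pancake_reward(candidate)
        if reward > best_reward:  # keep the change only if it helped
            best_strength, best_reward = candidate, reward
    return best_strength, best_reward


strength, reward = learn_by_trial_and_error()
print(strength, reward)  # strength should end up near 3.0 in this toy setup
```

Real robot learning has to cope with many joints, noisy sensors and costly physical trials, which is why needing few attempts matters so much.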

The algorithm that Dr Kormushev’s team wrote was so effective that the robot learned to flip a pancake in fewer than 50 attempts. They have also taught robots how to shoot a hockey puck to hit any target, walk at different speeds, jump, and climb up stairs or over obstacles. Dr Kormushev has even taught one of his oldest robots – a modified electric wheelchair with a tablet screen face called DE NIRO – to take a lunch order, autonomously navigate its way to a different floor of the building, acquire the food from the canteen and bring it back to him, all by itself.

DE NIRO was designed to be a jack-of-all-trades robot, the sort that might someday end up in our homes. If it did, this ability to learn and adapt to new environments would be essential given every home is different and things get moved around by the humans living in it. “Instead of a robot that has pre-programmed skills, we could teach the robot how to tidy up the house the way we like it, what time to do certain tasks, and things like that,” Dr Kormushev says.

Dr Petar Kormushev taught Robot DE NIRO (pictured in background) to pick up a lunch order from a different floor. Here Dr Kormushev (centre) is pictured with undergraduate students in his Robot Intelligence Lab.


Robots around us

Professor Andrew Davison leads Imperial’s Dyson Robotics Lab, which was founded in 2014 following his collaboration with Dyson on the core computer vision algorithms used for localisation and mapping in the Dyson 360 Eye robot vacuum cleaner. Professor Davison believes that mass-market robotic products could become an integral part of future homes and societies, and technologies the lab is researching could open up whole new applications.


The Dyson Robotics Lab does fundamental research on robot localisation, mapping, scene understanding and interaction, primarily driven by computer vision algorithms using probabilistic estimation and machine learning. A key goal is general, efficient understanding of the shapes and object contents of rooms or other scenes to enable general, long-term intelligent action. “It is an important moment in robotics research,” says Professor Davison. “Improvements in perception and interaction algorithms are allowing new robot concepts and products which break away from repetitive actions in controlled environments like factories and aim to operate robustly in the complex wider world.”

If we’re going to live alongside robots, they’ll need to cope with our unpredictability, even if we don’t want them to be unpredictable themselves. The same goes for robots unleashed into any real-world environment. Designing such machines will require the integration of expertise from across mathematics, computing, physics and biology. “There is a lot of hype around artificial intelligence. But AI is only real intelligence if it is embodied or rooted in the physical world,” says Dr Kovac. “It is really about bringing data, control, and the physical body together. That’s what robotics is.”

The challenges are many, but by encouraging collaboration across disciplines, and with industrial and international partners, Imperial is well-placed to achieve this. The future of robotics hinges upon partnerships – both between those developing the robots, and between humans and the robots themselves. Rather than taking over the world and destroying us, robots have the potential to extend human capabilities by providing complementary capabilities and skills. Imperial is committed to developing machines which embrace this collaborative spirit.

Linda Geddes is a Bristol-based freelance journalist writing about biology, medicine and technology. She spent nine years at New Scientist working as a news editor, features editor and reporter, and remains a consultant to the magazine.

All photos by Thomas Angus, except photos of The Terminator, Professor Brian Davies, and the Da Vinci robot (see captions).

This Imperial Story was produced by the College's Enterprise Division.

The Enterprise team helps businesses to solve their challenges by accessing Imperial's expertise, talent and resources, and helps academics and students find new ways to turn their expertise into benefits for society.