Many real networks are either too large or complex to be analyzed directly. Therefore, networks are often simplified or sparsified prior to or during the analysis, in a way that still preserves their structural properties. Such abstraction techniques are usually called network backbones or skeletons.

Possibly the simplest approach for network abstraction is computing its spanning tree [1]. Although somewhat simplistic, spanning trees computed with a proper algorithm already preserve many common properties of real networks, such as their small-world and scale-free structure. However, they cannot preserve network clustering or community structure.

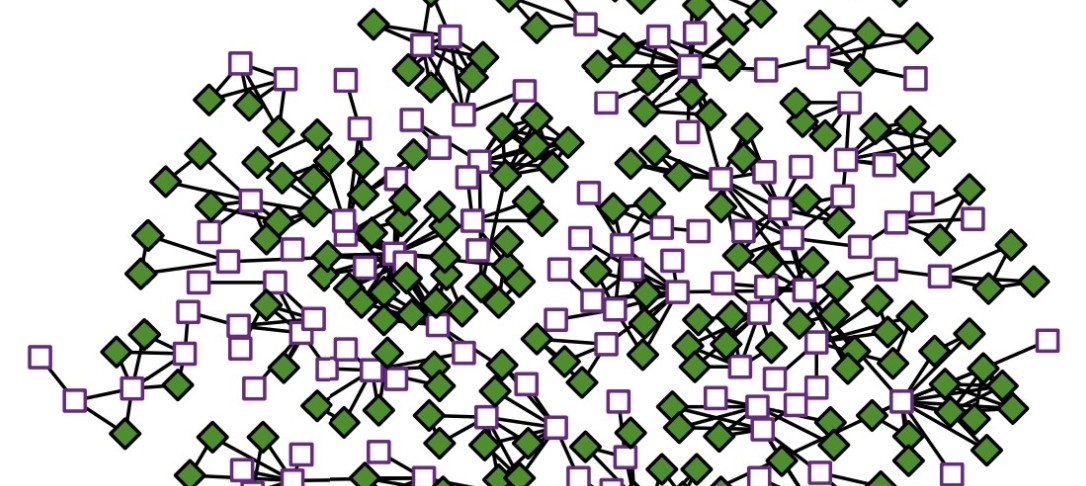

A suitable alternative is a convex skeleton [2], which is a generalization of a spanning tree in which each edge can be replaced by a clique. Convex skeletons preserve most common properties of real networks, but they are much more difficult to compute.

In this talk, I will first present different network backbones and skeletons, and then empirically compare them on a variety of synthetic and real networks. I will conclude with applications of network abstraction in operations research, network visualization, graph neural networks and other.

1. Šubelj, L. “Algorithms for spanning trees of unweighted networks”. PeerJ Computer Science (2021).

2. Šubelj, L. “Convex skeletons of complex networks”. Journal of the Royal Society Interface 15(145),

20180422 (2018).

(This has been rescheduled from the Autumn term).