Process Operations research within the Sargent Centre covers optimization of the operations of existing plants, optimal designs for new plants that take account of dynamic operation at the design stage, and management of supply chains and of batch processing. Process Control covers the theory and practice of advanced automation and control with an emphasis on application to the process industries. Applied research covers a broad spectrum including oil & gas, reaction and absorption, granulation and polymers. Competencies feeding into applications include integration of design, operation and decision making; multiscale modelling; integrated monitoring of processes and of electrical and mechanical equipment; and theoretical advances in robust parametric control. A special feature of the programme is the ability to move new theory rapidly towards practical realisation and thus to help the process control sector take early advantage of new developments.

A selection from the broad range of activities in the past year includes:

Model-based optimization and control for process intensification in chemical and biopharmaceutical systems

Process Intensification (PI) is the response to world-wide changes in the chemical/biopharmaceutical industry, driven by demand for specific end-use product properties and by stricter energy and environmental constraints. In this framework, the OPTICO Project aims to overcome the limitations on implementing PI by establishing a new methodological design approach for sustainable, intensified chemical/biopharmaceutical plant design. Within this scope, four specific processes (chromatography, polymerization, crystallization, oxidation) are examined in detail; the main focus lies on the formulation of mathematical models of the processes and on the identification of the bottlenecks that limit their efficiency. Additionally, advanced control strategies are developed to enable robust operation of the process, which in turn will enable the fulfilment of legal regulations while maintaining high process efficiency.

In collaboration with ETH Zurich and ChromaCon AG, the Sargent Centre mainly focuses on the optimization and control of a specific chromatographic system, the Multicolumn Solvent Gradient Purification (MCSGP) process. Due to its periodic and highly nonlinear behaviour as well as the limited information available during operation, all model-based control strategies for this system have been unsuccessful so far. Using a multi-parametric programming approach, we are aiming to develop not only a theoretical but also a practical approach to tackle this challenging class of problems. Theoretical work includes devising new tools and algorithms for state estimation and for the solution of the multi-parametric optimization problem. Applications include the use of system identification and model reduction techniques to recast the original model into a simpler form which can then be solved using the multi-parametric framework.
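The idea behind multi-parametric programming can be illustrated with a minimal sketch. It is not the MCSGP controller; it assumes a hypothetical scalar problem, min over u of 0.5·u² − θ·u subject to u_lo ≤ u ≤ u_hi, where θ is the parameter (for example a measured state). The offline step returns the explicit solution as three critical regions, each with an affine control law, and the online step only has to locate the region and evaluate that law. All function names and numerical values are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class CriticalRegion:
    theta_min: float  # lower edge of the region in parameter space
    theta_max: float  # upper edge of the region in parameter space
    gain: float       # explicit law inside the region: u = gain * theta + offset
    offset: float


def solve_offline(u_lo, u_hi):
    """Explicit solution of min_u 0.5*u**2 - theta*u s.t. u_lo <= u <= u_hi.

    The unconstrained optimum is u = theta; outside [u_lo, u_hi] the
    corresponding bound is active, which gives three critical regions.
    """
    inf = float("inf")
    return [
        CriticalRegion(-inf, u_lo, 0.0, u_lo),  # lower bound active
        CriticalRegion(u_lo, u_hi, 1.0, 0.0),   # no constraint active
        CriticalRegion(u_hi, inf, 0.0, u_hi),   # upper bound active
    ]


def evaluate_online(regions, theta):
    """Online step: find the region containing theta and apply its affine law."""
    for region in regions:
        if region.theta_min <= theta <= region.theta_max:
            return region.gain * theta + region.offset
    raise ValueError("theta lies outside the explored parameter space")


def brute_force(theta, u_lo, u_hi, n=20001):
    """Grid search over u, used here only to cross-check the explicit law."""
    best_u, best_cost = None, float("inf")
    for i in range(n):
        u = u_lo + (u_hi - u_lo) * i / (n - 1)
        cost = 0.5 * u * u - theta * u
        if cost < best_cost:
            best_u, best_cost = u, cost
    return best_u
```

Calling `evaluate_online(solve_offline(-1.0, 2.0), theta)` replaces the online optimization by a table lookup, which is the property that makes the explicit approach attractive for fast or resource-limited controllers.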

Theoretical advances in multi-parametric mixed-integer programming

The OPTICO project focuses on the theoretical aspects of multi-parametric programming. Although the field of multi-parametric programming involving only continuous variables has been explored in depth, such an analysis is missing for the hybrid case, where integer and continuous variables coexist in the problem formulation. The development of novel solution strategies for multi-parametric Mixed-Integer Quadratic Programming (mp-MIQP) problems is therefore at the core of the work. These strategies are also being considered within control formulations, especially in combination with dynamic programming. This combination has proven to be very efficient for the continuous case, and it is hoped that it will have a similar effect for the hybrid case, opening up solutions for more complex (and therefore more realistic) problems.

Novel state estimation techniques and their application in multi-parametric model predictive control

The implementation of explicit/multi-parametric MPC, and of MPC in general, is based on the assumption that the state values are readily available from the system measurements and that there is a clear, measurable output with little noise. Since information on the states is not always available, either because it is difficult to measure all the necessary states or because data acquisition equipment is expensive, advanced state estimation techniques are usually required. Dealing with system constraints entails the simultaneous use of moving horizon estimation (MHE), which can be implemented in a multi-parametric fashion. As a consequence, the design and implementation of mp-MPC together with state estimators is highly recommended.
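The role of a state estimator can be illustrated with a minimal sketch. Note that it swaps in a plain linear Kalman filter for a scalar system rather than the constrained, multi-parametric MHE discussed above, so it ignores constraints entirely. The model x[k+1] = a·x[k] + b·u[k] + w, y[k] = c·x[k] + v and all numerical values are hypothetical.

```python
import random


def kalman_step(x_est, p_est, u, y, a, b, c, q, r):
    """One predict/update cycle of a scalar Kalman filter.

    q and r are the variances of the process and measurement noise.
    """
    # Predict the state and its error variance one step ahead.
    x_pred = a * x_est + b * u
    p_pred = a * p_est * a + q
    # Correct the prediction with the new measurement y.
    k_gain = p_pred * c / (c * p_pred * c + r)
    x_new = x_pred + k_gain * (y - c * x_pred)
    p_new = (1.0 - k_gain * c) * p_pred
    return x_new, p_new


def run_demo(steps=200, seed=0):
    """Simulate a noisy scalar process and compare raw and filtered errors."""
    rng = random.Random(seed)
    a, b, c = 0.95, 0.1, 1.0   # assumed model parameters
    q, r = 1e-4, 0.04          # assumed noise variances
    x_true, x_est, p_est = 1.0, 0.0, 1.0
    sq_err_meas = sq_err_est = 0.0
    for _ in range(steps):
        u = 1.0
        x_true = a * x_true + b * u + rng.gauss(0.0, q ** 0.5)
        y = c * x_true + rng.gauss(0.0, r ** 0.5)
        x_est, p_est = kalman_step(x_est, p_est, u, y, a, b, c, q, r)
        sq_err_meas += (y - x_true) ** 2
        sq_err_est += (x_est - x_true) ** 2
    # Root-mean-square error of the raw measurement and of the estimate.
    return (sq_err_meas / steps) ** 0.5, (sq_err_est / steps) ** 0.5
```

In this setting the filtered estimate tracks the true state more closely than the raw measurement, which is the kind of state information a model predictive controller would consume.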

Development of multi-parametric controllers for periodic, nonlinear systems

Industrial processes are commonly characterized by highly nonlinear models that follow a periodic operation profile. The demand for high product purity and low energy consumption renders the development of advanced controllers essential, as the process should always operate under optimal conditions that assure fulfilment of the imposed constraints. It is therefore of vital importance to establish a holistic framework that will allow the development of multi-parametric controllers for nonlinear, periodic systems. Ultimately we are aiming to install the controllers on a bench-top experimental setup and test them against the original process.

Bioprocessing facility fit analysis

Due to limited information at pilot scale, facility fit assessments in industry are typically based on mass balance equations and previous experience. The project provides systematic simulation tools and advanced decision support methods to predict the full range of possible outcomes of large-scale facilities. It allows assessment of the trade-off between unexpected loss and the cost of oversized equipment, and it addresses facility fit issues arising during the transfer of small pilot-scale processes into large manufacturing-scale facilities. Facility fit issues refer to any failure to meet a requirement (e.g. unexpected mass loss, extra processing time) caused by a mismatch of process equipment.

The outcome is a data mining decisional tool that combines a decision tree classification method with Monte Carlo simulation for rapid prediction of facility fit issues and debottlenecking of existing facilities. The industrially relevant case study demonstrated that this tool can be applied not only to predict the degree of facility fit of existing facilities, by exploring the impact of process fluctuations on product mass loss, but also to identify debottlenecking solutions worth pursuing, using a series of if-then rules that describe the critical combinations of factors leading to different mass loss levels. The highlights are:

- A data mining decisional tool was created for prediction of facility fit issues

- Monte Carlo simulation was used to mimic biomanufacturing process fluctuations

- The decision tree discovered a set of rules to predict the root causes of mass loss

- Three different debottlenecking solutions were compared for a legacy facility
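The combination of Monte Carlo simulation and decision tree classification can be sketched as follows. This is an illustrative toy, not the tool developed in the project: it assumes a hypothetical capture step whose capacity is binding capacity × column volume × number of cycles, samples titre and binding capacity at random, and fits a one-level decision tree (a single if-then rule) by minimising the weighted Gini impurity. All names, ranges and the 5% loss threshold are assumptions.

```python
import random


def mass_loss_fraction(titre, volume, binding_capacity, column_volume, cycles):
    """Fraction of product lost when the harvest exceeds the capture capacity."""
    mass_in = titre * volume                              # g of product
    capacity = binding_capacity * column_volume * cycles  # g that can be captured
    return max(0.0, mass_in - capacity) / mass_in


def simulate(n=2000, seed=1):
    """Monte Carlo sampling of process fluctuations (assumed uniform ranges)."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        titre = rng.uniform(2.0, 6.0)   # g/L
        dbc = rng.uniform(25.0, 40.0)   # g per L of resin
        loss = mass_loss_fraction(titre, 2000.0, dbc, 60.0, 4)
        rows.append(({"titre": titre, "binding_capacity": dbc}, loss > 0.05))
    return rows


def gini(positives, size):
    """Gini impurity of a node with `positives` True labels out of `size`."""
    p = positives / size
    return 2.0 * p * (1.0 - p)


def best_stump(rows):
    """Find the single threshold rule with the lowest weighted Gini impurity."""
    n = len(rows)
    total_pos = sum(1 for _, label in rows if label)
    best = None  # (weighted impurity, feature name, threshold)
    for feature in rows[0][0]:
        ordered = sorted(rows, key=lambda row: row[0][feature])
        left_pos = 0
        for i in range(1, n):
            left_pos += ordered[i - 1][1]
            lo, hi = ordered[i - 1][0][feature], ordered[i][0][feature]
            if lo == hi:
                continue  # no threshold separates identical values
            right_pos = total_pos - left_pos
            score = (i * gini(left_pos, i) + (n - i) * gini(right_pos, n - i)) / n
            if best is None or score < best[0]:
                best = (score, feature, 0.5 * (lo + hi))
    return best
```

The result of `best_stump(simulate())` reads as a rule of the form "if feature exceeds threshold, then significant mass loss is likely"; a full decision tree would apply the same split search recursively to each branch.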

Furthermore, the innovation of this work has drawn great interest from MedImmune US, and there is strong potential for the tool to be applied to their manufacturing facilities in future demonstration projects. The work is a sub-project of the EPSRC Centre for Innovative Manufacturing in Emergent Macromolecular Therapies led with UCL. It is sponsored by EPSRC and pharmaceutical companies including GE Healthcare, GlaxoSmithKline, Pfizer and MedImmune.

A superstructure optimisation approach for clean water treatment

The water industry in England and Wales is one of the most heavily regulated industries, and water utilities are faced with increasingly stringent targets for the quality of the water received at customers’ taps. The project addresses the need for water treatment plants to function optimally in order to remain competitive, by making it possible to predict how the plants can be improved.

Due to the complex nature of the overall water treatment process and the interactions between unit operations, work in the literature has so far focussed solely on the performance optimisation of individual water treatment units. This approach will inevitably lead to sub-optimal overall performance, as the operation of one unit has an impact on the next and the units therefore cannot be considered in isolation. A process-wide approach will therefore be of significant benefit to the water industry by increasing the overall process efficiency and thus decreasing plant costs. This is being done by taking into account the interactions between individual processing units to consider the plant as a whole. A design and operating procedure that considers an optimal series of several unit operations, including units which may run in parallel, whilst reducing the number of steps required for a given product quality, will improve the overall plant efficiency.

The use of superstructures, which contain all possible alternatives of a potential treatment network, has proved an effective tool for the synthesis of chemical engineering process flowsheets and for overall plant optimisation. This work addresses the current gap in water research by developing an approach based on superstructures for the synthesis of clean water treatment works through the application of mixed-integer optimisation techniques. A systematic framework is presented for the representation of superstructures and the derivation of optimisation models in process synthesis. The state task network (STN) and state equipment network (SEN) are proposed as the two fundamental representations of superstructures used to describe the overall plant operation, including multiple sources of raw water and water treatment processes. Publications resulting from this work demonstrate that the approach can provide valuable guidance in clean water treatment process design and operation.
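A superstructure can be pictured as a set of alternative units at each treatment stage, with one binary selection per alternative. The sketch below is a toy under stated assumptions: the stages, the options, the log-removal credits and the annualised costs are all hypothetical, and exhaustive enumeration stands in for the mixed-integer optimisation solver that a realistic problem would require.

```python
from itertools import product

# Hypothetical superstructure: stage -> option -> (log removal, annualised cost).
SUPERSTRUCTURE = {
    "clarification": {"bypass": (0.0, 0.0), "coagulation": (1.0, 40.0)},
    "filtration": {"rapid_gravity": (1.5, 55.0), "membrane": (3.0, 120.0)},
    "disinfection": {"chlorination": (2.0, 20.0), "uv": (2.5, 45.0)},
}


def synthesise(superstructure, target_log_removal):
    """Pick one option per stage, minimising cost subject to a removal target.

    Returns (flowsheet, cost, removal), or None if no flowsheet is feasible.
    """
    stages = list(superstructure)
    best = None
    # Each element of the product is one complete flowsheet embedded
    # in the superstructure.
    for choice in product(*(superstructure[stage] for stage in stages)):
        removal = sum(superstructure[s][o][0] for s, o in zip(stages, choice))
        cost = sum(superstructure[s][o][1] for s, o in zip(stages, choice))
        if removal >= target_log_removal and (best is None or cost < best[1]):
            best = (dict(zip(stages, choice)), cost, removal)
    return best
```

Enumeration is only viable here because the toy has eight flowsheets; the number of alternatives grows exponentially with the number of stages, which is why mixed-integer optimisation techniques are used in practice.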

Process Automation Research Programme:

Worldwide, there is a huge base of currently installed process plants and our research finds ways of helping these to run efficiently and smoothly. This is achieved by optimizing the operation of the process and equipment by detection and diagnosis of the root causes of process inefficiencies. The methods make use of all available information, not only measurements from operating processes but also qualitative and connectivity information from process schematics and drawings, plus reasoning from physical first principles.

Process plants also have mechanical and electrical equipment. We look at measurements from the mechanical and electrical sub-systems to understand the whole picture and are also exploring the interactions between a.c. transmission grids and process plants which are large electrical consumers. The work is being undertaken by Imperial researchers, industrial research engineers on secondment and PhD students sharing their time between Imperial and industrial placements with collaborating companies. More information on Process Automation is available at: http://www3.imperial.ac.uk/processautomation

CO2 capture from gas-fired power plants (GAS-FACTS)

The Gas-FACTS project is funded by the Engineering and Physical Sciences Research Council (EPSRC). It started on 01 April 2012 and involves a consortium of UK universities. Gas-FACTS will provide important underpinning research for gas turbine modifications and post-combustion capture technologies for gas power plants. These technologies are candidates for deployment between 2020 and 2030, and will be in operation until 2050 or beyond. The challenge with CO2 capture in gas power plants is that gas-fired generators provide balancing services to the transmission grid in the presence of intermittent loads and variable generation from renewables. Such variable operation poses great challenges for the control and operation of carbon capture on gas power plants.

The Process Automation group and the Thermophysics group at Imperial are working in the Gas-FACTS consortium on flexible capture systems for natural gas power plants through:

- Real time control of natural gas capture systems for power plants

- Experimental testing of liquid solvents including novel amine mixtures

- Modelling and analysis for improved transient performance

The aim is integration of the gas turbine and post-combustion capture to achieve excellent performance over a wide range of operating conditions, to allow rapid changes in output, to give an appropriate balance between fixed and variable costs, and to give good performance at a range of operating points. To date, a dynamic simulation is complete and is being used to explore operation and control in a range of scenarios.

Operations and Control – Highlighted project

Optimal operation of compressors in networks

It is well known that compressors consume large amounts of energy in different industrial sectors. In particular, compressors are one of the major energy consumers in many intensive chemical processes such as air separation. The focus of the project is to study optimization of the overall system, i.e. the system in which multiple compressors operate in networks integrated with chemical process units.

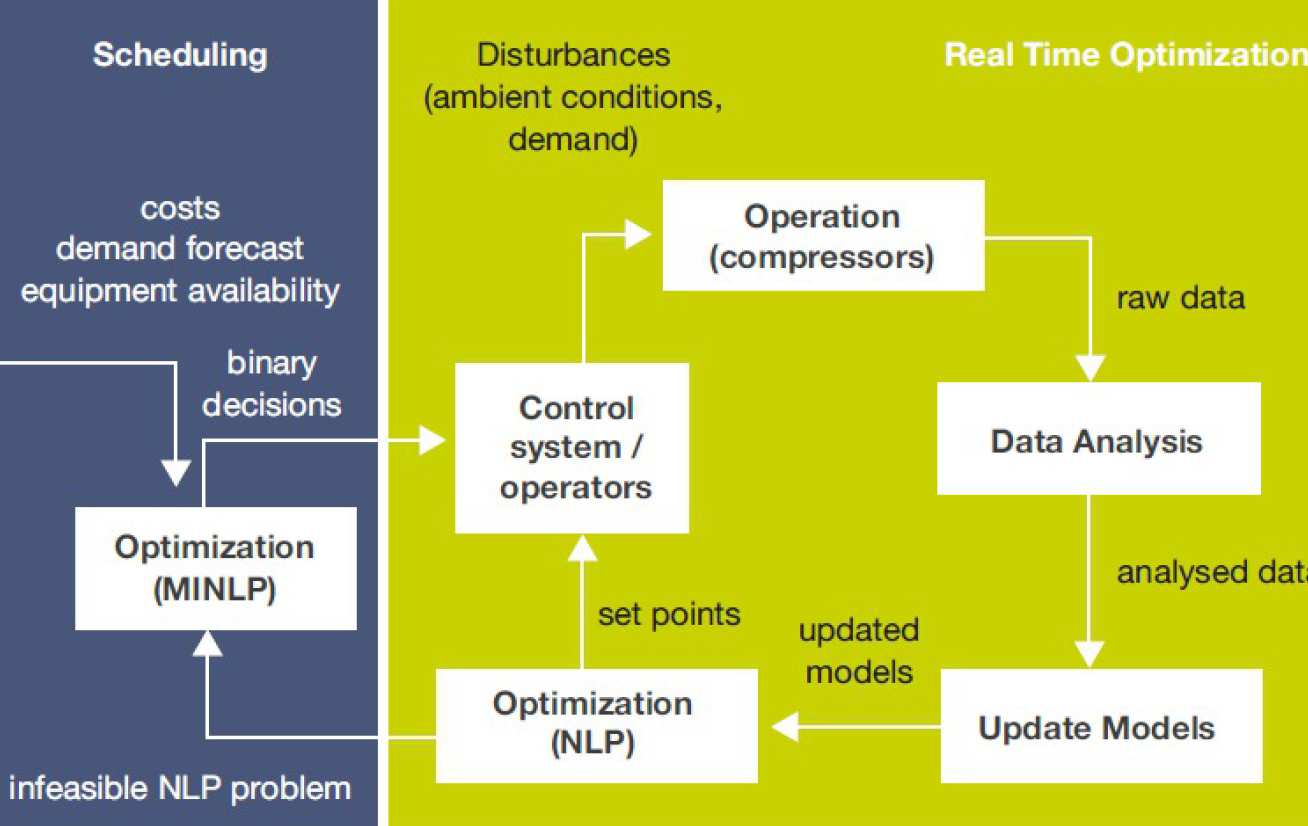

The optimization of compressors leads to two different types of problem: (a) Real Time Optimization (RTO), which addresses the optimal distribution of load among compressors with a fixed configuration, and (b) optimal scheduling and maintenance of compressors, which considers information over long time periods. The RTO scheme analyses raw process data to update the compressor models used in the optimization, and then estimates the flow set points of the compressors that give more efficient operation than conventional methods. The estimation of the best set points is called optimal distribution of load, also known as load sharing or multi-compressor capacity optimization. By contrast, optimal scheduling takes the forecast of the demand and other parameters and computes the optimal configuration of the compressors. Figure 1 shows the framework of these two interacting optimisation problems.

A study of the literature revealed the lack of a systematic way to share the load of compressors optimally under varying operational conditions, such as atmospheric temperature and pressure, and demand. A common assumption is that individual compressors have the same characteristics and the same efficiencies; however, many authors and practitioners have reported that this is difficult or impossible to achieve in practice, because these characteristics and efficiencies change over time due to fouling and non-uniform maintenance plans. Nevertheless, the conventional practice is to distribute the load evenly among the compressors, or to use similar strategies based on the assumption of constant performance and characteristics over time.

The RTO algorithm in Figure 1 solves the problem of optimal load sharing. After data collection and conditioning, an NLP optimization problem employs data-driven models to estimate the optimal load sharing in the form of set points for the manipulated variables (opening of the actuators, i.e. the inlet guide vanes) and the controlled variables (mass flow rates). The set points are given to the control system, whose role is to apply and hold them until the next run of the RTO.
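The benefit of optimal load sharing over an equal split can be seen in a minimal sketch with two compressors in parallel. It assumes, purely for illustration, that each data-driven model reduces to a convex quadratic power curve P(m) = a + b·m + c·m² in the mass flow m; the coefficients, bounds and demand are hypothetical and do not come from the study. With these assumptions the NLP has a closed-form solution, obtained by equating the marginal power of the two machines and clipping to the feasible flow range.

```python
def power(coeffs, m):
    """Assumed quadratic power model P(m) = a + b*m + c*m**2."""
    a, b, c = coeffs
    return a + b * m + c * m * m


def optimal_split(coeffs1, coeffs2, bounds1, bounds2, demand):
    """Minimise P1(m1) + P2(m2) subject to m1 + m2 = demand and flow bounds.

    Requires positive quadratic coefficients so that the problem is convex.
    """
    # Feasible interval for m1 once m2 = demand - m1 is substituted.
    lo = max(bounds1[0], demand - bounds2[1])
    hi = min(bounds1[1], demand - bounds2[0])
    if lo > hi:
        raise ValueError("demand cannot be met within the flow bounds")
    _, b1, c1 = coeffs1
    _, b2, c2 = coeffs2
    # Stationarity: b1 + 2*c1*m1 = b2 + 2*c2*(demand - m1).
    m1 = (b2 - b1 + 2.0 * c2 * demand) / (2.0 * (c1 + c2))
    # For a convex objective, clipping onto the interval gives the optimum.
    m1 = min(max(m1, lo), hi)
    return m1, demand - m1


def total_power(coeffs1, coeffs2, m1, m2):
    return power(coeffs1, m1) + power(coeffs2, m2)


# Hypothetical example: compressor 2 is fouled and therefore less efficient.
CLEAN = (200.0, 20.0, 0.5)
FOULED = (220.0, 24.0, 0.8)
BOUNDS = (5.0, 15.0)
```

For example, `optimal_split(CLEAN, FOULED, BOUNDS, BOUNDS, 20.0)` shifts load towards the cleaner machine, and `total_power` for that split is lower than for the equal split of 10 and 10.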

The scheduling problem is formulated as an MINLP or MILP with decisions that involve discrete events (for example switching a compressor on or off). These decisions are used in the RTO. When the online compressors are not able to meet the requested demand (due to disturbances coming from the customers), the scheduling problem updates the models and estimates a new schedule of the compressors which can satisfy the demand. The interactions between RTO and scheduling are part of the ongoing research.
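The discrete nature of the scheduling decisions can be sketched with a toy problem. It assumes hypothetical compressors with fixed capacities, per-period running costs and start-up costs, and it enumerates every on/off plan instead of calling a MILP solver, which is only practical for a handful of units and periods.

```python
from itertools import product


def schedule(demand, capacity, run_cost, startup_cost, initial_on):
    """Choose on/off states per period that meet demand at minimum cost.

    Returns (plan, cost); plan is a tuple with one on/off tuple per period,
    or (None, inf) if the demand cannot be met.
    """
    n_units = len(capacity)
    configs = list(product((0, 1), repeat=n_units))
    best_plan, best_cost = None, float("inf")
    for plan in product(configs, repeat=len(demand)):
        cost, previous, feasible = 0.0, initial_on, True
        for required, config in zip(demand, plan):
            # Online capacity must cover the demand of the period.
            if sum(c * on for c, on in zip(capacity, config)) < required:
                feasible = False
                break
            cost += sum(r * on for r, on in zip(run_cost, config))
            # A start-up cost is paid when a unit switches from off to on.
            cost += sum(
                s for s, on, was_on in zip(startup_cost, config, previous)
                if on and not was_on
            )
            previous = config
        if feasible and cost < best_cost:
            best_plan, best_cost = plan, cost
    return best_plan, best_cost
```

With an assumed demand forecast of `[8, 14, 14, 6]`, capacities `(10, 10)`, running costs `(5, 6)`, start-up costs `(3, 4)` and only the first compressor initially online, the cheapest plan keeps the first compressor running throughout and brings the second online only for the two high-demand periods.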

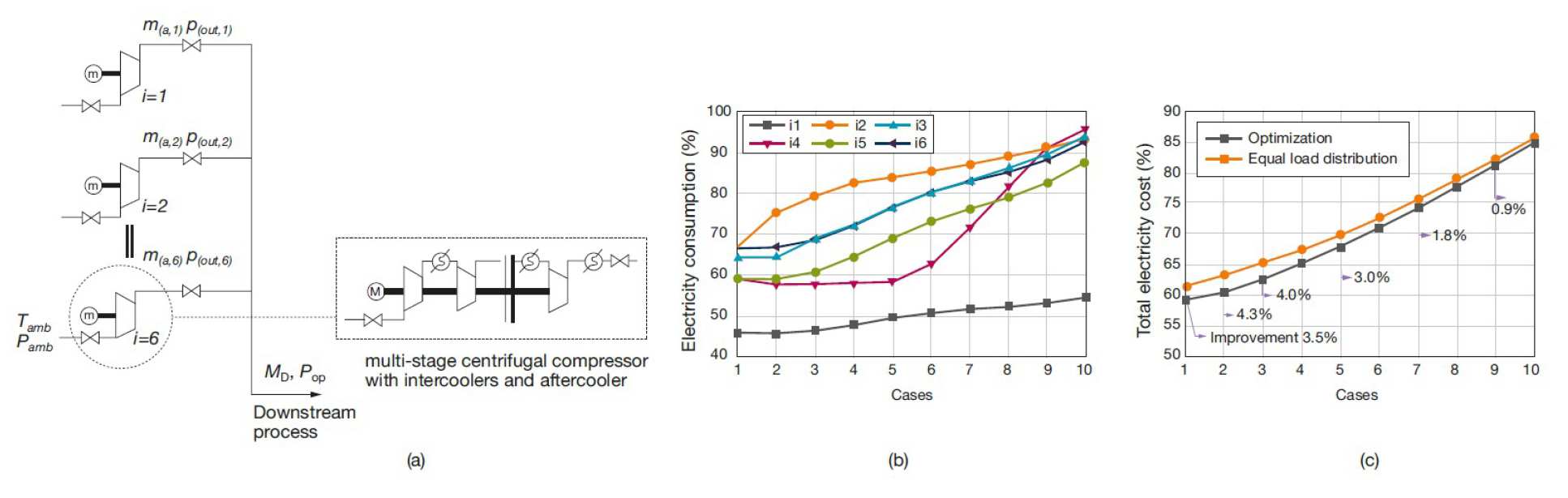

Xenos et al. (2014a) presented the optimization of a compressor station with multi-stage centrifugal air compressors operating in parallel. Figures 2b and 2c show the electricity consumption of each compressor in the optimization case and the comparison of the total electricity consumption between the optimization case and the equal distribution strategy. The optimal distribution of load was examined for ten different cases with different demands. The results showed that the optimal distribution of load reduces the total electricity consumption compared to the equal split strategy.

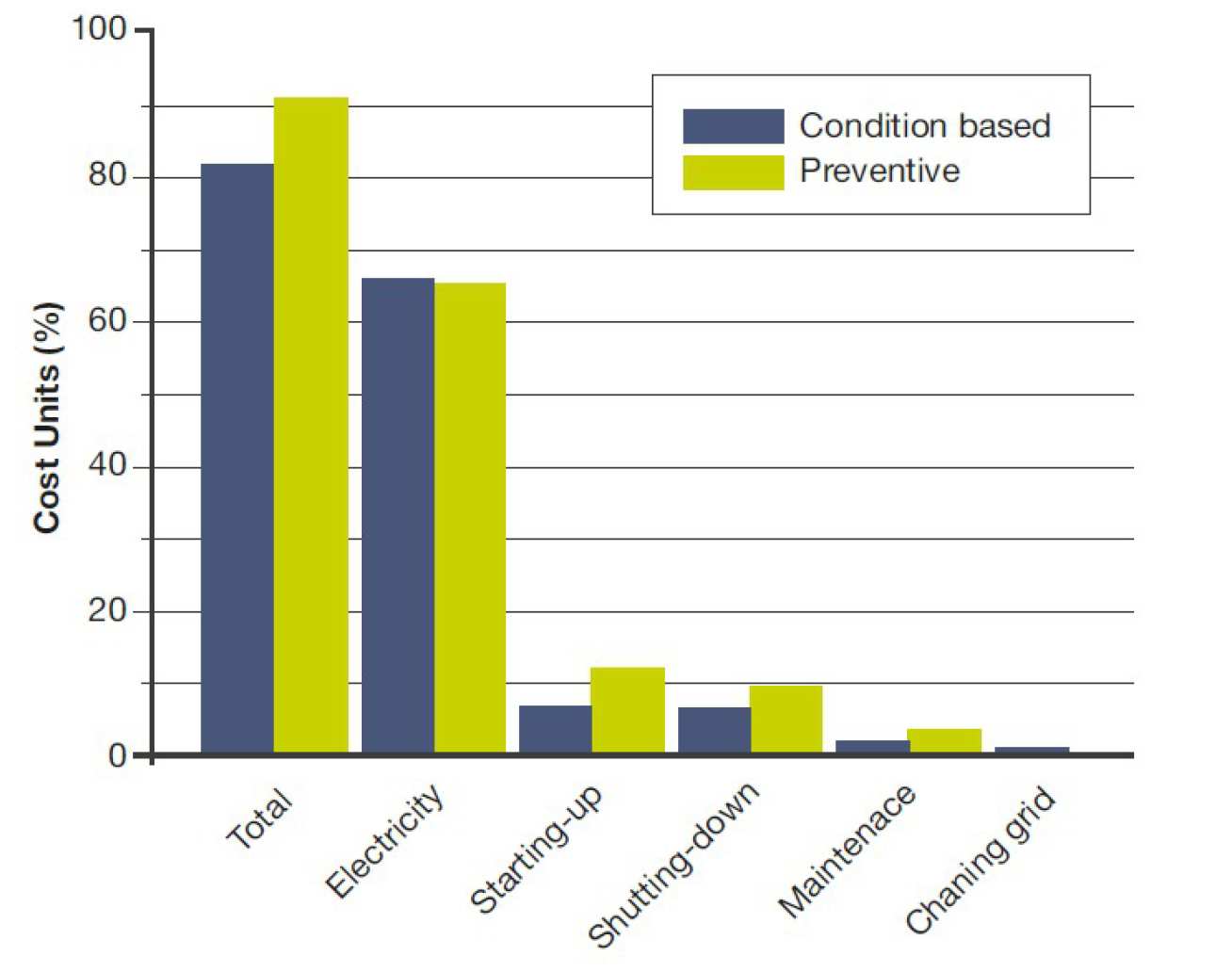

The state of the art in the scheduling of compressor networks examines the optimal operation and maintenance of compressors without considering the gradual degradation of a compressor over time. Xenos et al. (2014b) studied the scheduling and maintenance of compressor networks taking into account the deterioration of the performance of a compressor over time. An illustrative example considering the overall air separation plant (i.e. air compressors, separation units, storage tanks) compared a condition-based maintenance optimization strategy with a preventive maintenance optimization strategy. The condition-based maintenance optimization achieved an 11% reduction in the overall cost, especially in the start-up, shut-down and maintenance costs, compared to the benchmark preventive maintenance strategy (Fig 3).

The work was funded by the Marie Curie FP7-ITN project “Energy savings from smart operation of electrical, process and mechanical equipment – ENERGY SMARTOPS”, PITN-GA-2010-264940, in collaboration with the EPSRC Research Project EP/G059071/1 “Design Toolbox for Energy Efficiency in the Process Industry”.

References

Xenos D.P., Cicciotti M., Bouaswaig A.E.F., Thornhill N.F., Martinez-Botas R., 2014a, Modeling and optimization of industrial centrifugal compressor stations employing data-driven methods, Proceedings of ASME Turbo Expo 2014: Turbine Technical Conference and Exposition GT2014, June 16-20, 2014, Düsseldorf, Germany.

Xenos D.P., Kopanos G.M., Cicciotti M., Pistikopoulos E.N., Thornhill N.F., 2014b, Operational optimization of compressors in parallel considering condition-based maintenance, Proceedings of the 24th European Symposium on Computer Aided Process Engineering - ESCAPE 24, June 15-18, 2014, Budapest, Hungary.
