Translational AI in Critical Care

What we do

In this research, we apply safety assurance frameworks to AI-based clinical decision support tools in order to identify and mitigate safety risks that could lead to patient harm.

Why it is important

Establishing confidence in the safety of AI-based clinical decision support systems is important before clinical deployment and regulatory approval, particularly as these systems become more autonomous. Our work provides a use case for the systematic safety assurance of AI-based clinical systems that generates explicit safety evidence. This approach could be replicated for other AI applications or clinical contexts, and could inform medical device regulatory bodies.

How it can benefit patients

By demonstrating how AI-based clinical decision support systems can be systematically safety-assured, this study addresses one of the many hurdles that slow down the clinical deployment and assessment of these tools.

Summary of current research

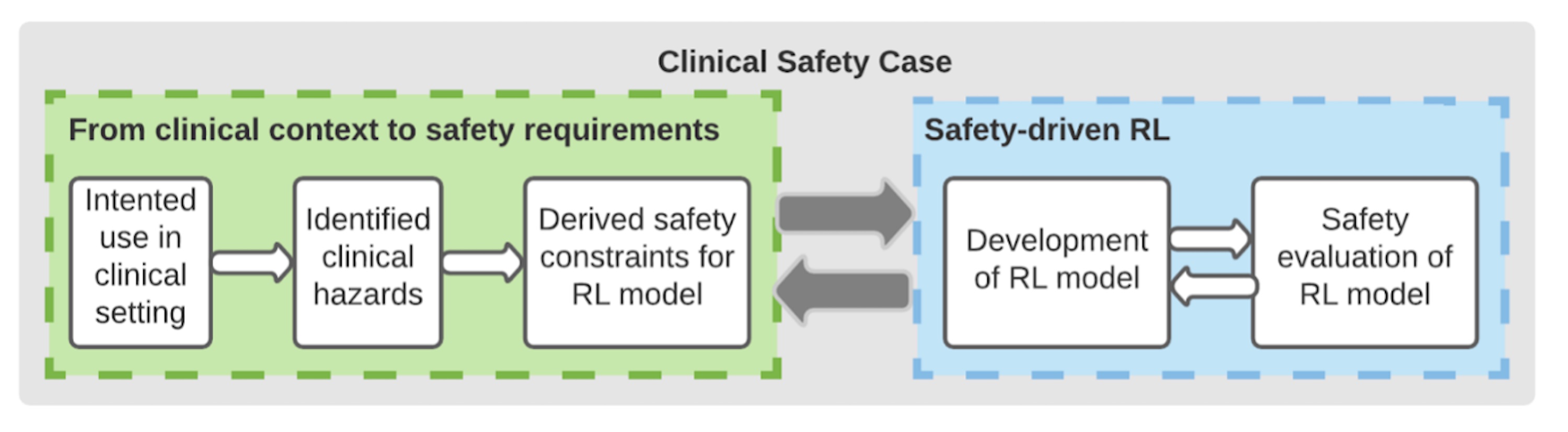

We tested AI-based clinical decision support systems against expert-defined safety scenarios before clinical deployment, and compared their performance with that of human clinicians. For AI systems based on reinforcement learning (which learn by maximising a reward), we showed that tweaking the reward signal could improve performance while staying within predefined safety constraints.
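To make the reward-tweaking idea more concrete, here is a minimal Python sketch under stated assumptions; it is not the method used in the published work. It assumes each expert-defined safety scenario can be written as a rule that flags an unsafe state-action pair, and that the reward is tweaked by subtracting a fixed penalty for each violated rule (a simple form of reward shaping). The names `SafetyScenario`, `shaped_reward` and `violation_rate`, the `map_mmhg` and `vasopressor` fields, the penalty of 10 and the 55 mmHg threshold are all hypothetical and purely illustrative.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical representations: a state maps clinical variable names to values,
# an action maps treatment names to doses. Neither comes from the source work.
State = Dict[str, float]
Action = Dict[str, float]


@dataclass
class SafetyScenario:
    """A hypothetical expert-defined rule that flags an unsafe (state, action) pair."""
    name: str
    is_unsafe: Callable[[State, Action], bool]


def shaped_reward(base_reward: float, state: State, action: Action,
                  scenarios: List[SafetyScenario], penalty: float = 10.0) -> float:
    """Subtract a fixed penalty for every safety scenario the action violates."""
    violations = sum(1 for s in scenarios if s.is_unsafe(state, action))
    return base_reward - penalty * violations


def violation_rate(policy: Callable[[State], Action], test_states: List[State],
                   scenarios: List[SafetyScenario]) -> float:
    """Fraction of test states in which the policy's action breaks any scenario."""
    if not test_states:
        return 0.0
    unsafe = sum(
        1 for st in test_states
        if any(s.is_unsafe(st, policy(st)) for s in scenarios)
    )
    return unsafe / len(test_states)


# Hypothetical scenario with an illustrative threshold (not clinical guidance):
# withholding vasopressors when mean arterial pressure is very low is flagged.
hypotension = SafetyScenario(
    name="no vasopressor when MAP < 55 mmHg",
    is_unsafe=lambda st, a: st["map_mmhg"] < 55 and a["vasopressor"] == 0.0,
)

if __name__ == "__main__":
    state = {"map_mmhg": 50.0}
    print(shaped_reward(1.0, state, {"vasopressor": 0.0}, [hypotension]))  # -9.0
    print(shaped_reward(1.0, state, {"vasopressor": 0.1}, [hypotension]))  # 1.0
    never_treat = lambda st: {"vasopressor": 0.0}
    states = [{"map_mmhg": 50.0}, {"map_mmhg": 70.0}]
    print(violation_rate(never_treat, states, [hypotension]))  # 0.5
```

In this sketch the same rule set is used twice: as a penalty inside the training reward, and as a test-time check via `violation_rate`. That loosely mirrors the idea of testing a policy against predefined scenarios before deployment, though the actual scenarios and reward design in the research may differ.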

Information

Funders

- “Assuring Autonomy International Programme” from Lloyd's Register Foundation

- University of York


Publications

Levels of Autonomy and Safety Assurance for AI-Based Clinical Decision Systems. Paul Festor, Ibrahim Habli, Yan Jia, Anthony Gordon, A. Aldo Faisal & Matthieu Komorowski. Lecture Notes in Computer Science (LNPSE), vol. 12853, 2021.

Enabling risk-aware Reinforcement Learning for medical interventions through uncertainty decomposition. Paul Festor, Giulia Luise, Matthieu Komorowski & A. Aldo Faisal. arXiv preprint, 2021. doi.org/10.48550/arXiv.2109.07827

Researchers

Dr Matthieu Komorowski

Clinical Senior Lecturer

Professor Anthony Gordon

Chair in Anaesthesia and Critical Care

Professor Aldo Faisal

Professor of AI & Neuroscience